April, 2022

2022-04-01

- I did G1GC tests on DSpace Test (linode26) to compliment the CMS tests I did yesterday

- The Discovery indexing took this long:

real 334m33.625s

user 227m51.331s

sys 3m43.037s

2022-04-04

- Start a full harvest on AReS

- Help Marianne with submit/approve access on a new collection on CGSpace

- Go back in Gaia’s batch reports to find records that she indicated for replacing on CGSpace (ie, those with better new copies, new versions, etc)

- Looking at the Solr statistics for 2022-03 on CGSpace

- I see 54.229.218.204 on Amazon AWS made 49,000 requests, some of which with this user agent:

Apache-HttpClient/4.5.9 (Java/1.8.0_322), and many others with a normal browser agent, so that’s fishy! - The DSpace agent pattern

http.?agentseems to have caught the first ones, but I’ll purge the IP ones - I see 40.77.167.80 is Bing or MSN Bot, but using a normal browser user agent, and if I search Solr for

dns:*msnbot* AND dns:*.msn.com.I see over 100,000, which is a problem I noticed a few months ago too… - I extracted the MSN Bot IPs from Solr using an IP facet, then used the

check-spider-ip-hits.shscript to purge them

- I see 54.229.218.204 on Amazon AWS made 49,000 requests, some of which with this user agent:

2022-04-10

- Start a full harvest on AReS

2022-04-13

- UptimeRobot mailed to say that CGSpace was down

- I looked and found the load at 44…

- There seem to be a lot of locks from the XMLUI:

$ psql -c 'SELECT * FROM pg_locks pl LEFT JOIN pg_stat_activity psa ON pl.pid = psa.pid;' | grep -o -E '(dspaceWeb|dspaceApi)' | sort | uniq -c | sort -n

3173 dspaceWeb

- Looking at the top IPs in nginx’s access log one IP in particular stands out:

941 66.249.66.222

1224 95.108.213.28

2074 157.90.209.76

3064 66.249.66.221

95743 185.192.69.15

- 185.192.69.15 is in the UK

- I added a block for that IP in nginx and the load went down…

2022-04-16

- Start harvest on AReS

2022-04-18

- I woke up to several notices from UptimeRobot that CGSpace had gone down and up in the night (of course I’m on holiday out of the country for Easter)

- I see there are many locks in use from the XMLUI:

$ psql -c 'SELECT * FROM pg_locks pl LEFT JOIN pg_stat_activity psa ON pl.pid = psa.pid;' | grep -o -E '(dspaceWeb|dspaceApi)' | sort | uniq -c

8932 dspaceWeb

- Looking at the top IPs making requests it seems they are Yandex, bingbot, and Googlebot:

# cat /var/log/nginx/access.log /var/log/nginx/access.log.1 | awk '{print $1}' | sort | uniq -c | sort -h

752 69.162.124.231

759 66.249.64.213

864 66.249.66.222

905 2a01:4f8:221:f::2

1013 84.33.2.97

1201 157.55.39.159

1204 157.55.39.144

1209 157.55.39.102

1217 157.55.39.161

1252 207.46.13.177

1274 157.55.39.162

2553 66.249.66.221

2941 95.108.213.28

- One IP is using a stange user agent though:

84.33.2.97 - - [18/Apr/2022:00:20:38 +0200] "GET /bitstream/handle/10568/109581/Banana_Blomme%20_2020.pdf.jpg HTTP/1.1" 404 10890 "-" "SomeRandomText"

- Overall, it seems we had 17,000 unique IPs connecting in the last nine hours (currently 9:14AM and log file rolled over at 00:00):

# cat /var/log/nginx/access.log | awk '{print $1}' | sort | uniq | wc -l

17314

- That’s a lot of unique IPs, and I see some patterns of IPs in China making ten to twenty requests each

- The ISPs I’ve seen so far are ChinaNet and China Unicom

- I extracted all the IPs from today and resolved them:

# cat /var/log/nginx/access.log | awk '{print $1}' | sort | uniq > /tmp/2022-04-18-ips.txt

$ ./ilri/resolve-addresses-geoip2.py -i /tmp/2022-04-18-ips.txt -o /tmp/2022-04-18-ips.csv

- The top ASNs by IP are:

$ csvcut -c 2 /tmp/2022-04-18-ips.csv | sed 1d | sort | uniq -c | sort -n | tail -n 10

102 GOOGLE

139 Maxihost LTDA

165 AMAZON-02

393 "China Mobile Communications Group Co., Ltd."

473 AMAZON-AES

616 China Mobile communications corporation

642 M247 Ltd

2336 HostRoyale Technologies Pvt Ltd

4556 Chinanet

5527 CHINA UNICOM China169 Backbone

$ csvcut -c 4 /tmp/2022-04-18-ips.csv | sed 1d | sort | uniq -c | sort -n | tail -n 10

139 262287

165 16509

180 204287

393 9808

473 14618

615 56041

642 9009

2156 203020

4556 4134

5527 4837

- I spot checked a few IPs from each of these and they are definitely just making bullshit requests to Discovery and HTML sitemap etc

- I will download the IP blocks for each ASN except Google and Amazon and ban them

$ wget https://asn.ipinfo.app/api/text/nginx/AS4837 https://asn.ipinfo.app/api/text/nginx/AS4134 https://asn.ipinfo.app/api/text/nginx/AS203020 https://asn.ipinfo.app/api/text/nginx/AS9009 https://asn.ipinfo.app/api/text/nginx/AS56041 https://asn.ipinfo.app/api/text/nginx/AS9808

$ cat AS* | sed -e '/^$/d' -e '/^#/d' -e '/^{/d' -e 's/deny //' -e 's/;//' | sort | uniq | wc -l

20296

- I extracted the IPv4 and IPv6 networks:

$ cat AS* | sed -e '/^$/d' -e '/^#/d' -e '/^{/d' -e 's/deny //' -e 's/;//' | grep ":" | sort > /tmp/ipv6-networks.txt

$ cat AS* | sed -e '/^$/d' -e '/^#/d' -e '/^{/d' -e 's/deny //' -e 's/;//' | grep -v ":" | sort > /tmp/ipv4-networks.txt

- I suspect we need to aggregate these networks since they are so many and nftables doesn’t like it when they overlap:

$ wc -l /tmp/ipv4-networks.txt

15464 /tmp/ipv4-networks.txt

$ aggregate6 /tmp/ipv4-networks.txt | wc -l

2781

$ wc -l /tmp/ipv6-networks.txt

4833 /tmp/ipv6-networks.txt

$ aggregate6 /tmp/ipv6-networks.txt | wc -l

338

- I deployed these lists on CGSpace, ran all updates, and rebooted the server

- This list is SURELY too broad because we will block legitimate users in China… but right now how can I discern?

- Also, I need to purge the hits from these 14,000 IPs in Solr when I get time

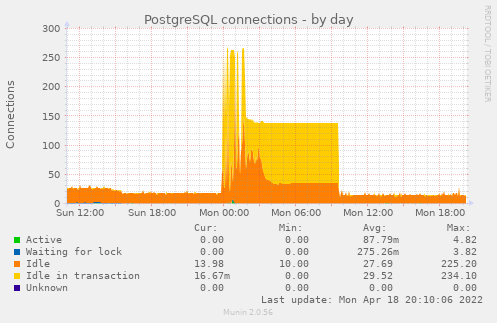

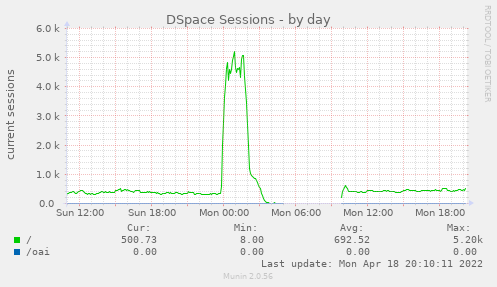

- Looking back at the Munin graphs a few hours later I see this was indeed some kind of spike that was out of the ordinary:

- I used

grepcidrwith the aggregated network lists to extract IPs matching those networks from the nginx logs for the past day:

# cat /var/log/nginx/access.log /var/log/nginx/access.log.1 | awk '{print $1}' | sort -u > /tmp/ips.log

# while read -r network; do grepcidr $network /tmp/ips.log >> /tmp/ipv4-ips.txt; done < /tmp/ipv4-networks-aggregated.txt

# while read -r network; do grepcidr $network /tmp/ips.log >> /tmp/ipv6-ips.txt; done < /tmp/ipv6-networks-aggregated.txt

# wc -l /tmp/ipv4-ips.txt

15313 /tmp/ipv4-ips.txt

# wc -l /tmp/ipv6-ips.txt

19 /tmp/ipv6-ips.txt

- Then I purged them from Solr using the

check-spider-ip-hits.sh:

$ ./ilri/check-spider-ip-hits.sh -f /tmp/ipv4-ips.txt -p

2022-04-23

- A handful of spider user agents that I identified were merged into COUNTER-Robots so I updated the ILRI override in our DSpace and regenerated the

examplefile that contains most patterns- I updated CGSpace, then ran all system updates and rebooted the host

- I also ran

dspace cleanup -vto prune the database

2022-04-24

- Start a harvest on AReS

2022-04-25

- Looking at the countries on AReS I decided to collect a list to remind Jacquie at WorldFish again about how many incorrect ones they have

- There are about sixty incorrect ones, some of which I can correct via the value mappings on AReS, but most I can’t

- I set up value mappings for seventeen countries, then sent another sixty or so to Jacquie and Salem to hopefully delete

- I notice we have over 1,000 items with region

Africa South of Sahara- I am surprised to see these because we did a mass migration to

Sub-Saharan Africain 2020-10 when we aligned to UN M.49 - Oh! It seems I used a capital O in

Of! - This is curious, I see we missed

East AsiaandNorthern America, because those are still in our list, but UN M.49 usesEastern AsiaandNorthern America… I will have to raise that with Peter and Abenet later - For now I will just re-run my fixes:

- I am surprised to see these because we did a mass migration to

$ cat /tmp/regions.csv

cg.coverage.region,correct

East Africa,Eastern Africa

West Africa,Western Africa

Southeast Asia,South-eastern Asia

South Asia,Southern Asia

Africa South of Sahara,Sub-Saharan Africa

North Africa,Northern Africa

West Asia,Western Asia

$ ./ilri/fix-metadata-values.py -i /tmp/regions.csv -db dspace -u dspace -p 'fuuu' -f cg.coverage.region -m 227 -t correct

- Then I started a new harvest on AReS

2022-04-27

- I woke up to many up down notices for CGSpace from UptimeRobot

- The server has load 111.0… sigh.

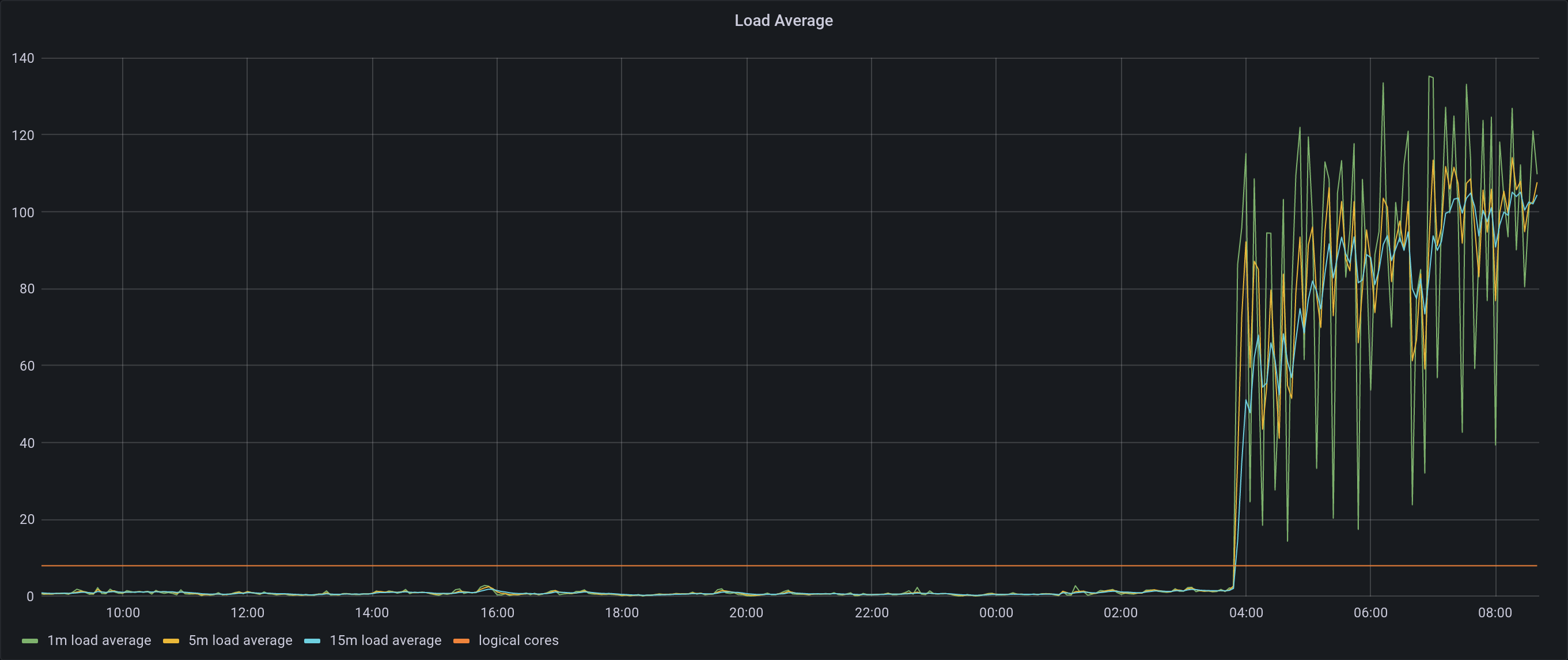

- According to Grafana it seems to have started at 4:00 AM

- There are a metric fuck ton of database locks from the XMLUI:

$ psql -c 'SELECT * FROM pg_locks pl LEFT JOIN pg_stat_activity psa ON pl.pid = psa.pid;' | grep -o -E '(dspaceWeb|dspaceApi)' | sort | uniq -c

128 dspaceApi

16890 dspaceWeb

- As for the server logs, I don’t see many IPs connecting today:

# cat /var/log/nginx/access.log | awk '{print $1}' | sort | uniq | wc -l

2924

- But there appear to be some IPs making many requests:

# cat /var/log/nginx/access.log | awk '{print $1}' | sort | uniq -c | sort -h

...

345 207.46.13.53

646 66.249.66.222

678 54.90.79.112

1529 136.243.148.249

1797 54.175.8.110

2304 174.129.118.171

2523 66.249.66.221

2632 52.73.204.196

2667 54.174.240.122

5206 35.172.193.232

5646 35.153.131.101

6373 3.85.92.145

7383 34.227.10.4

8330 100.24.63.172

8342 34.236.36.176

8369 44.200.190.111

8371 3.238.116.153

8391 18.232.101.158

8631 3.239.81.247

8634 54.82.125.225

- 54.82.125.225, 3.239.81.247, 18.232.101.158, 3.238.116.153, 44.200.190.111, 34.236.36.176, 100.24.63.172, 3.85.92.145, 35.153.131.101, 35.172.193.232, 54.174.240.122, 52.73.204.196, 174.129.118.171, 54.175.8.110, and 54.90.79.112 are all on Amazon and using this normal-looking user agent:

Mozilla/5.0 (Macintosh; Intel Mac OS X 10_10_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/44.0.2403.125 Safari/537.3

- None of these hosts are re-using their DSpace session ID so they are definitely not normal browsers as they are claiming:

$ grep 54.82.125.225 dspace.log.2022-04-27 | grep -oE 'session_id=[A-Z0-9]{32}:ip_addr=' | sort | uniq | wc -l

5760

$ grep 3.239.81.247 dspace.log.2022-04-27 | grep -oE 'session_id=[A-Z0-9]{32}:ip_addr=' | sort | uniq | wc -l

6053

$ grep 18.232.101.158 dspace.log.2022-04-27 | grep -oE 'session_id=[A-Z0-9]{32}:ip_addr=' | sort | uniq | wc -l

5841

$ grep 3.238.116.153 dspace.log.2022-04-27 | grep -oE 'session_id=[A-Z0-9]{32}:ip_addr=' | sort | uniq | wc -l

5887

$ grep 44.200.190.111 dspace.log.2022-04-27 | grep -oE 'session_id=[A-Z0-9]{32}:ip_addr=' | sort | uniq | wc -l

5899

...

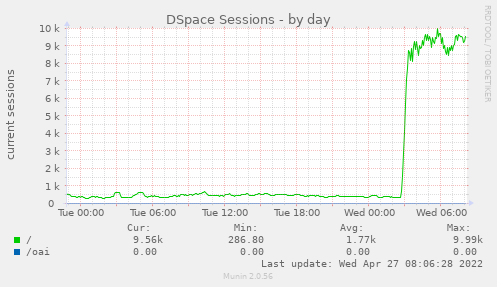

- And we can see a massive spike in sessions in Munin:

- I see the following IPs using that user agent today:

# grep 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_10_1) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/44.0.2403.125 Safari/537.36' /var/log/nginx/access.log | awk '{print $1}' | sort | uniq -c | sort -h

678 54.90.79.112

1797 54.175.8.110

2697 174.129.118.171

2765 52.73.204.196

3072 54.174.240.122

5206 35.172.193.232

5646 35.153.131.101

6783 3.85.92.145

7763 34.227.10.4

8738 100.24.63.172

8748 34.236.36.176

8787 3.238.116.153

8794 18.232.101.158

8806 44.200.190.111

9021 54.82.125.225

9027 3.239.81.247

- I added those IPs to the firewall and then purged their hits from Solr:

$ ./ilri/check-spider-ip-hits.sh -f /tmp/ips.txt -p

Purging 6024 hits from 100.24.63.172 in statistics

Purging 1719 hits from 174.129.118.171 in statistics

Purging 5972 hits from 18.232.101.158 in statistics

Purging 6053 hits from 3.238.116.153 in statistics

Purging 6228 hits from 3.239.81.247 in statistics

Purging 5305 hits from 34.227.10.4 in statistics

Purging 6002 hits from 34.236.36.176 in statistics

Purging 3908 hits from 35.153.131.101 in statistics

Purging 3692 hits from 35.172.193.232 in statistics

Purging 4525 hits from 3.85.92.145 in statistics

Purging 6048 hits from 44.200.190.111 in statistics

Purging 1942 hits from 52.73.204.196 in statistics

Purging 1944 hits from 54.174.240.122 in statistics

Purging 1264 hits from 54.175.8.110 in statistics

Purging 6117 hits from 54.82.125.225 in statistics

Purging 486 hits from 54.90.79.112 in statistics

Total number of bot hits purged: 67229

- Then I created a CSV with these IPs and reported them to AbuseIPDB.com:

$ cat /tmp/ips.csv

IP,Categories,ReportDate,Comment

100.24.63.172,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

174.129.118.171,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

18.232.101.158,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

3.238.116.153,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

3.239.81.247,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

34.227.10.4,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

34.236.36.176,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

35.153.131.101,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

35.172.193.232,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

3.85.92.145,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

44.200.190.111,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

52.73.204.196,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

54.174.240.122,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

54.175.8.110,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

54.82.125.225,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

54.90.79.112,4,2022-04-27T04:00:37-10:00,"Excessive automated HTTP requests"

- An hour or so later two more IPs on Amazon started making requests with that user agent too:

- 3.82.22.114

- 18.234.122.84

- Load on the server went back up, sigh

- I added those IPs to the firewall drop list and purged their hits from Solr as well:

$ ./ilri/check-spider-ip-hits.sh -f /tmp/ips.txt -p

Purging 2839 hits from 3.82.22.114 in statistics

Purging 592 hits from 18.234.122.84 in statistics

Total number of bot hits purged: 343

- Oh god, there are more coming

- 3.81.21.251

- 54.162.92.93

- 54.226.171.89

2022-04-28

- Had a meeting with FAO and the team from SEAFDAC, who run many repositories that are integrated with AGROVOC

- Elvi from SEAFDAC has modified the DSpace-CRIS 6.x VIAF lookup plugin to query AGROVOC

- Also, they are doing a nice integration similar to the WorldFish / MELSpace repositories where they store the AGROVOC URIs in DSpace and show the terms with an icon in the UI

- See: https://repository.seafdec.org.ph/handle/10862/6320